Something uncomfortable happened in Virginia, West Virginia, and Kansas City over the past two years.

Utilities that had already scheduled coal power plants for retirement quietly kept them running. The reason: AI data centers were drawing so much power from regional grids that aging fossil fuel plants had to stay online just to keep up.

The same story unfolded in Ireland, where data centers now consume around 21% of national electricity, and that figure could reach 32% by 2026. In Mexico and parts of Europe, communities began protesting new data center construction, citing water shortages, local air quality, and strain on the power grid.

The generative AI boom has a carbon problem that grew too large to ignore.

Data centers account for just over 1% of global electricity demand, and AI's share of that is growing fast. The carbon footprint of AI systems alone could be between 32.6 and 79.7 million tons of CO₂ in 2025, and their water footprint between 312.5 and 764.6 billion liters.

To put that in perspective: New York City emitted 52.2 million tonnes of CO₂ in 2023. AI could end up with a climate footprint comparable to that of a major world city.

CTOs at AI companies, cloud providers, and enterprise technology firms are now under real pressure from regulators, investors, sustainability teams, and local communities to answer a question that keeps getting harder to sidestep: how do you keep building better AI without burning through the planet?

This is the story of what they are actually doing about it.

TL;DR

- Global data centers consume roughly 415 TWh of electricity every year, and AI is the fastest-growing driver of that number.

- AI’s carbon footprint could reach 32.6 to 79.7 million tons of CO₂ in 2025 alone, comparable to all of New York City’s emissions.

- Training a single GPT-3-scale model released 552 metric tons of CO₂ and consumed around 700,000 liters of cooling water.

- CTOs are cutting AI’s carbon footprint through carbon-aware scheduling, efficient model architectures, renewable energy contracts, and next-generation cooling systems.

- Green AI is shifting from a PR talking point to a real engineering and business priority.

Why Training Large AI Models Consumes So Much Energy

Massive GPU Clusters Running for Weeks at a Time

Training a large language model is a fundamentally different kind of computing task from running ordinary software. It requires thousands to tens of thousands of specialized chips, mostly NVIDIA GPUs, running intensive calculations continuously for weeks or sometimes months at a stretch.

Training generative AI models with billions of parameters demands a staggering amount of electricity, which increases carbon dioxide emissions and puts pressure on the electric grid.

In a 2021 research paper, scientists from Google and the University of California, Berkeley estimated that training GPT-3 alone consumed 1,287 megawatt-hours of electricity, enough to power about 120 average U.S. homes for a year, and generated about 552 tons of carbon dioxide.
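The two GPT-3 figures can be sanity-checked with a few lines of arithmetic. This is a minimal sketch that assumes an average U.S. home uses about 10,700 kWh of electricity per year (an assumption, not a number from the paper); it also derives the average grid carbon intensity that the energy and emissions estimates imply together.

```python
# Back-of-envelope check of the GPT-3 training estimates quoted above.
# HOME_KWH_PER_YEAR is an assumed U.S. household average, not from the paper.

TRAINING_ENERGY_MWH = 1_287    # estimated GPT-3 training energy
TRAINING_EMISSIONS_T = 552     # estimated training emissions, metric tons CO2
HOME_KWH_PER_YEAR = 10_700     # assumption: average U.S. home, kWh per year


def homes_powered_for_a_year(energy_mwh: float, home_kwh: float) -> float:
    """Number of average homes the energy could supply for one year."""
    return energy_mwh * 1_000 / home_kwh


def implied_intensity_kg_per_kwh(emissions_t: float, energy_mwh: float) -> float:
    """Average grid carbon intensity implied by the two estimates."""
    return (emissions_t * 1_000) / (energy_mwh * 1_000)


homes = homes_powered_for_a_year(TRAINING_ENERGY_MWH, HOME_KWH_PER_YEAR)
intensity = implied_intensity_kg_per_kwh(TRAINING_EMISSIONS_T, TRAINING_ENERGY_MWH)
print(f"about {homes:.0f} homes for a year")
print(f"implied grid intensity: {intensity:.2f} kg CO2 per kWh")
```

The implied intensity of roughly 0.43 kg CO₂ per kWh is a property of the grid that powered the run, which is why the same job on a cleaner grid would produce far less CO₂.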

And that was just one training run for a model that is already considered outdated. The models being trained today are dramatically larger.

“A single ChatGPT query consumes almost 10 times the electricity of a standard Google search. Multiply that across billions of daily queries, plus the training that produced the model behind those queries, and the scale of the problem becomes clear.”

Cooling Systems and Disappearing Water

The chips generate enormous heat. Getting that heat out of the building requires cooling infrastructure that consumes a large share of the facility's total energy, on top of what the servers themselves draw.

In some cases, data centers consume up to 5 million gallons of water per day, the equivalent of a small town’s daily use.

Li et al. (2023) estimated that a single GPT-3-scale training run consumed around 700,000 liters of cooling water.

Google’s data center water consumption increased by nearly 88% between 2019 and 2024, driven directly by AI expansion. In drought-prone regions like California and Arizona, that is a resource conflict that local communities are increasingly unwilling to accept quietly.

The IEA estimated that data centers used a total of 560 billion liters of water in 2023. But drawing on corporate sustainability reports from Apple, Google, and Meta, researcher Alex de Vries-Gao shows that indirect water use is significantly underestimated and is probably three to four times higher than the official estimate.

Exponential Compute Demand and Scaling Laws

The logic driving this consumption is straightforward: larger models trained on more data tend to produce better results. This empirical pattern, described by so-called scaling laws, has guided AI development since 2017. It has also been the primary engine of energy demand growth.
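A common rule of thumb from the scaling-law literature shows why "bigger model, more data" translates so directly into energy: training a dense transformer takes roughly 6 × N × D floating-point operations, where N is the parameter count and D is the number of training tokens. The sketch below assumes GPT-3's commonly reported 175 billion parameters and 300 billion training tokens. It is an approximation, not an exact accounting.

```python
# Approximate training compute with the rule of thumb C ≈ 6 * N * D FLOPs.
# The GPT-3-scale inputs are commonly reported figures, used as assumptions.


def training_flops(params: float, tokens: float) -> float:
    """Approximate total training FLOPs for a dense transformer."""
    return 6 * params * tokens


base = training_flops(175e9, 300e9)              # GPT-3-scale run
scaled = training_flops(2 * 175e9, 2 * 300e9)    # double model size and data

print(f"baseline: {base:.2e} FLOPs")
print(f"2x parameters and 2x tokens -> {scaled / base:.0f}x the compute")
```

Because compute scales with the product of model size and data, doubling both quadruples the work, and, at fixed hardware efficiency, roughly quadruples the energy.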

Some tech companies report annual growth of over 100% in computing demand for AI training and inference.

The IEA central scenario projects data center electricity consumption rising to 945 TWh by 2030. AI’s share of data center power use was roughly 5% to 15% recently, and could reach 35% to 50% by 2030.
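It helps to translate the IEA projection into an annual growth rate and absolute numbers. This sketch assumes the roughly 415 TWh figure is the 2024 baseline and applies the 35% to 50% AI share to the 2030 projection.

```python
# Implied growth of data center electricity use, assuming 415 TWh in 2024
# and the IEA central-scenario projection of 945 TWh in 2030.

BASELINE_TWH = 415    # assumed 2024 baseline
PROJECTED_TWH = 945   # IEA central scenario, 2030


def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Constant yearly growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


growth = implied_annual_growth(BASELINE_TWH, PROJECTED_TWH, 2030 - 2024)
ai_low, ai_high = 0.35 * PROJECTED_TWH, 0.50 * PROJECTED_TWH

print(f"implied growth: {growth:.1%} per year")
print(f"AI's share in 2030: {ai_low:.0f} to {ai_high:.0f} TWh")
```

Under these assumptions, consumption grows by roughly 15% a year, and AI alone could account for somewhere around 330 to 470 TWh by 2030.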

Many new AI data centers require 100 to 1,000 megawatts, equivalent to the demand of a medium-sized city. Grid operators in some regions face connection lead times of over two years just to connect new facilities to clean energy supplies. When clean energy supply falls short, some regional utilities have postponed the retirement of coal plants to meet data center loads.

The Rise of Green AI – What It Actually Means

For most of AI’s recent history, the industry ran on a simple principle: bigger models, more data, more compute, better results. Researchers coined the term “Red AI” to describe this brute-force approach.

Green AI is the countermovement, and it asks a different question. Can you achieve the same results with dramatically less compute, less energy, and less carbon?

The shift is being pushed by three forces converging at once. Energy costs at scale have gone from a rounding error to a meaningful line item. Regulatory pressure is building, particularly in the EU, where the EU AI Act will require large AI systems to report energy use, resource consumption, and other life cycle impacts. And efficiency is becoming a genuine competitive advantage: the team that trains equally capable models faster and cheaper wins.

The emergence of DeepSeek V3 in late 2024 was a wake-up call for Western AI labs. It reportedly achieved competitive performance at a fraction of the training cost of comparable US models. That single development proved that brute-force compute is one path forward, but certainly not the only one.

How CTOs are Reducing the AI Carbon Footprint

1. Carbon-Aware Model Training: Scheduling Smarter, Running Cleaner

One of the most immediately actionable changes does not require new hardware or new algorithms. It requires scheduling.

Carbon-aware computing means running energy-intensive training jobs when and where the electricity grid is cleanest. Solar energy peaks in the afternoon. Wind energy varies by region. Grids in Iceland, Norway, Quebec, and parts of the US Pacific Northwest run predominantly on hydroelectric and renewable sources. The same training job run in West Virginia versus Oregon might produce dramatically different emissions even with identical runtime.
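The core relationship is simple: emissions equal the energy consumed multiplied by the carbon intensity of the grid supplying it. The sketch below runs the same hypothetical job on two grids. Both intensity values are illustrative placeholders rather than measured figures for any specific region; real values come from a carbon-intensity data provider.

```python
# Same job, same energy, different grids: emissions = energy x grid intensity.
# The job size and both intensities are hypothetical placeholders.

JOB_ENERGY_KWH = 50_000  # hypothetical training job

GRID_INTENSITY_G_PER_KWH = {
    "coal-heavy grid (hypothetical)": 800,
    "hydro-heavy grid (hypothetical)": 80,
}


def job_emissions_tonnes(energy_kwh: float, intensity_g_per_kwh: float) -> float:
    """Convert energy (kWh) and grid intensity (g CO2/kWh) to metric tons of CO2."""
    return energy_kwh * intensity_g_per_kwh / 1e6


for grid, intensity in GRID_INTENSITY_G_PER_KWH.items():
    tonnes = job_emissions_tonnes(JOB_ENERGY_KWH, intensity)
    print(f"{grid}: {tonnes:.1f} t CO2")
```

Runtime and energy are identical in both cases. Only the grid changes, and with these placeholder intensities the emissions differ by a factor of ten.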

Google can now shift moveable compute tasks between different data centers, based on regional hourly carbon-free energy availability. This includes both variable sources of energy such as solar and wind, as well as always-on carbon-free energy sources.

Google Cloud’s Carbon-Aware Compute feature allows users to schedule their workloads to run during periods when the carbon intensity of the grid is lowest.

The Green Software Foundation’s Carbon Aware SDK makes this approach available to engineering teams well outside the hyperscale world. A CTO at a mid-size AI company can implement time-shifted training today, queuing large jobs for the renewable energy window, letting the scheduler handle the rest, with minimal impact on delivery timelines and meaningful impact on emissions.

Temporal load shifting to solar hours between 10am and 4pm can reduce carbon intensity by around 40% with zero hardware changes. That is the kind of gain that shows up in a sustainability report and costs almost nothing to implement.
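Time-shifting boils down to a small search problem: given an hourly carbon-intensity forecast and a deadline, pick the window with the lowest average intensity. The sketch below is a hypothetical illustration and does not use the Carbon Aware SDK's actual API. It assumes the job runs as one contiguous block and draws constant power, so minimizing average intensity also minimizes emissions. The forecast values are made up; in practice they would come from a provider such as WattTime or Electricity Maps.

```python
from typing import Sequence

# Hypothetical 24-hour forecast of grid carbon intensity (g CO2/kWh),
# with a midday dip standing in for solar generation.
FORECAST = [450] * 10 + [300, 250, 220, 230, 260, 320] + [450] * 8


def best_start_hour(forecast: Sequence[float], duration: int, deadline: int) -> int:
    """Start hour whose `duration`-hour window has the lowest total forecast
    intensity while still finishing by hour `deadline`."""
    if duration <= 0 or duration > deadline or deadline > len(forecast):
        raise ValueError("job cannot finish before the deadline")
    best_start, best_total = 0, float("inf")
    for start in range(deadline - duration + 1):
        total = sum(forecast[start:start + duration])
        if total < best_total:
            best_start, best_total = start, total
    return best_start


start = best_start_hour(FORECAST, duration=4, deadline=24)
shifted = sum(FORECAST[start:start + 4]) / 4
immediate = sum(FORECAST[0:4]) / 4
print(f"start at {start}:00, avg {shifted:.0f} g/kWh vs {immediate:.0f} g/kWh now")
```

The deadline parameter is the design choice that makes this practical. A job that must finish by hour 12 cannot wait for the midday dip, so the scheduler picks the best window it can still use.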

2. More Efficient Model Architectures: Precision Over Volume

The brute-force era is giving way to something more sophisticated.

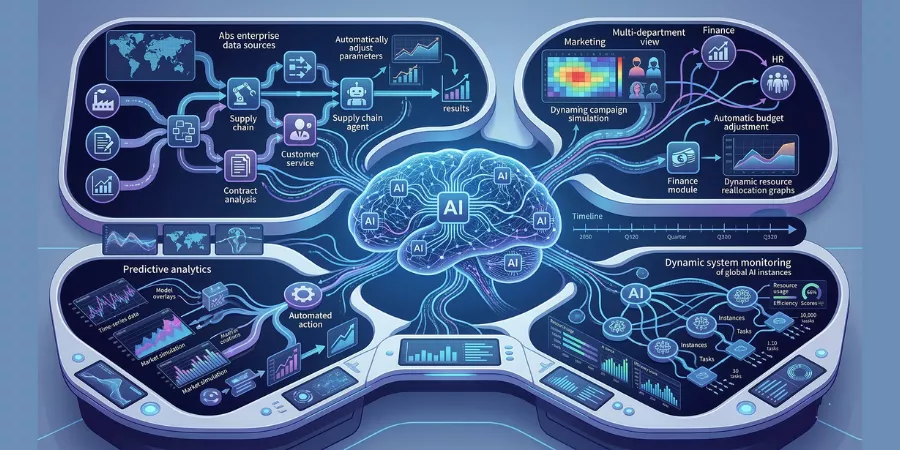

Mixture-of-Experts (MoE) architectures are one of the most significant shifts in how large models are now being designed. Instead of activating every parameter for every input, an MoE model uses a learned router to send each token to a small subset of specialized sub-networks, called experts. The total parameter count might be large, but the active compute per token shrinks dramatically.
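The routing idea can be shown with a toy top-k MoE layer. This is a deliberately simplified sketch on a scalar input: real MoE layers route token vectors with a trained gating network, and many designs use top-1 or top-2 routing. The experts and router weights here are hypothetical. The key point is that every expert gets a score, but only the k highest-scoring experts are actually evaluated.

```python
import math
from typing import Callable, List, Sequence, Tuple

Expert = Callable[[float], float]


def softmax(xs: Sequence[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x: float, router: Sequence[float], experts: Sequence[Expert],
                k: int = 2) -> Tuple[float, List[int]]:
    """Toy top-k MoE layer: score all experts, run only the top k, and mix
    their outputs with softmax weights renormalized over the chosen experts."""
    logits = [w * x for w in router]
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    weights = softmax([logits[i] for i in top])
    output = sum(w * experts[i](x) for w, i in zip(weights, top))
    return output, top


# Hypothetical experts and router weights, for illustration only.
EXPERTS: List[Expert] = [lambda v: v + 1, lambda v: 2 * v, lambda v: v * v, lambda v: -v]
ROUTER = [0.1, 2.0, 1.5, -1.0]

out, active = moe_forward(1.0, ROUTER, EXPERTS, k=2)
print(f"output={out:.4f}, active experts={active} of {len(EXPERTS)}")
```

Here only two of the four experts run for this input. At the scale of billions of parameters, the same selectivity is where MoE's compute savings come from.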

Sparse models, quantized models, and domain-specific fine-tuned models are all part of the same trend: precision over volume. A 7-billion-parameter model fine-tuned specifically for medical coding can outperform a 70-billion-parameter general model on that narrow task, and uses a fraction of the energy.
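Quantization is the easiest of these techniques to illustrate. The sketch below shows symmetric per-tensor int8 quantization, one common scheme among several: each weight is stored as an 8-bit integer plus a single shared scale factor, which cuts storage from 4 bytes per weight (fp32) to 1. Smaller weights mean less memory traffic per inference, which generally lowers energy use. The weight values are hypothetical.

```python
from typing import List, Sequence, Tuple


def quantize_int8(weights: Sequence[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor int8 quantization: w ≈ scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: Sequence[int], scale: float) -> List[float]:
    """Recover approximate float weights from int8 values and the scale."""
    return [scale * v for v in q]


weights = [0.42, -1.27, 0.003, 0.9, -0.5]  # hypothetical fp32 weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q, f"scale={scale:.4f}", f"max error={max_error:.4f}")
print("bytes per weight: fp32 = 4, int8 = 1")
```

The rounding error per weight is at most half the scale factor. Production toolkits refine this with per-channel scales and calibration to keep accuracy close to the original model.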

Google reports a 33x reduction in energy and a 44x reduction in carbon for the median Gemini prompt compared with 2024. That extraordinary gain did not come from building a bigger model. It came from making the model and the software serving it more efficient, combined with cleaner energy procurement.

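BLOOM, the 176-billion-parameter model from the Hugging Face-led BigScience project, was trained on newer, more efficient chips in France, where the grid is dominated by low-carbon nuclear power. Its training released only about 25 metric tons of CO₂, compared to GPT-3's 552 metric tons, a difference of more than 20x for a comparable model class.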

The lesson for engineering leaders: before approving a large-scale training run, ask whether a smaller, fine-tuned, or MoE model could achieve equivalent results for the actual use case. The efficiency gains are often 10x or more for domain-specific applications.

3. Specialized AI Chips and Hardware: Performance Per Watt Matters Now

Hardware selection has quietly become an environmental decision, alongside being a performance and cost decision.

Since the 1940s, the energy efficiency of computation has doubled roughly every 1.6 years. Purpose-built AI accelerators (TPUs, NPUs, and the latest generation of NVIDIA GPUs) push this curve further. They are designed to do exactly what LLM training and inference require, massive matrix multiplication at scale, with far less overhead than general-purpose CPUs.
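A doubling time turns into large multipliers surprisingly quickly. This short sketch assumes a constant 1.6-year doubling time and computes the cumulative efficiency gain over a few horizons. Real hardware generations arrive in steps rather than along a smooth curve, so treat the output as a trend line only.

```python
def efficiency_gain(years: float, doubling_time_years: float = 1.6) -> float:
    """Multiplicative gain in computations per unit of energy after `years`,
    assuming a constant doubling time."""
    return 2 ** (years / doubling_time_years)


for years in (1.6, 5, 10):
    print(f"after {years} years: {efficiency_gain(years):.1f}x")
```

Under this assumption, five years of progress is worth close to a 9x gain, and a decade is worth more than 70x.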

Google’s data centers deliver over six times more computing power per unit of electricity than they did just 5 years ago.

AI chips draw more power and emit more heat than traditional CPUs, but models with efficient architectures, or trained on more efficient chips, can still significantly reduce total energy use.

Google’s Ironwood TPU is claimed to be 30 times more energy-efficient than the company’s first publicly available TPU. The practical implication: a CTO choosing between GPU generations or between GPU and TPU is implicitly choosing a carbon trajectory for every training run that follows.

4. Renewable-Powered Data Centers: The Scale of Investment Is Real

The hyperscalers have made enormous commitments. The implementation is uneven, but the scale of real investment is significant.

Since 2010, Google has signed over 170 agreements to purchase more than 22 gigawatts of clean energy worldwide and invested over $3.7 billion in clean energy projects and partnerships, maintaining a 100% renewable energy match on a global basis every year since 2017.

In 2024 alone, Google signed agreements for more than 8 GW of clean energy, the highest annual volume of clean energy in the company’s history. This includes partnerships for solar in Taiwan, small modular nuclear reactors with Kairos Power, and geothermal energy projects.

Microsoft’s Chief Sustainability Officer Melanie Nakagawa has stated: “To achieve our goal of becoming carbon negative by 2030, we will need a broad range of innovative carbon-free energy solutions. Advanced nuclear energy and fusion energy are included in our multi-technology approach.”

Meta aims to reach net-zero emissions across its entire value chain by 2030, adding 15 gigawatts of new clean energy capacity and partnering with energy companies to power new data centers from renewable sources including solar and wind.

Geography matters here too. Meta's data center in Luleå, Sweden, benefits from naturally cool ambient temperatures and proximity to hydroelectric power, significantly reducing both cooling energy and emissions compared to US desert locations.

The honest complication: Google’s carbon emissions rose 48% over the past five years and Microsoft’s by 23.4% since 2020, largely due to cloud computing and AI. Renewable energy commitments are real, but AI’s growth is currently outpacing clean energy deployment timelines.

5. Smarter Cooling Technologies: The 30% Problem

Cooling can account for 30 to 40% of a data center’s total energy consumption. That makes it one of the highest-leverage areas for efficiency improvement.

Immersion cooling, submerging servers directly in a non-conductive dielectric fluid, eliminates the need for fans entirely. Advanced liquid cooling pipes coolant directly to chips rather than cooling the surrounding air. Both approaches reduce cooling energy use by up to 50% compared to traditional air-cooled facilities.

Power usage effectiveness (PUE) measures how efficiently a data center uses energy: it is total facility energy divided by the energy delivered to IT equipment, so a PUE of 1.0 means every watt powers computation, with zero wasted on overhead. The industry average sits around 1.5. Google reports a fleet-wide average PUE of around 1.1, the result of aggressive investment in thermal management at every level of the stack.
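PUE is total facility energy divided by IT equipment energy, so everything above 1.0 is overhead: cooling, power conversion, and lighting. This sketch compares the overhead at the industry-average PUE of about 1.5 with a PUE of 1.1, using a hypothetical annual IT load.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    return total_facility_kwh / it_equipment_kwh


def overhead_kwh(it_equipment_kwh: float, pue_value: float) -> float:
    """Energy spent beyond the IT load (cooling, power conversion, etc.)."""
    return it_equipment_kwh * (pue_value - 1)


IT_LOAD_KWH = 1_000_000  # hypothetical annual IT load

for p in (1.5, 1.1):
    print(f"PUE {p}: {overhead_kwh(IT_LOAD_KWH, p):,.0f} kWh of overhead")
```

For the same IT load, moving from 1.5 to 1.1 cuts overhead energy by 80%, which is why the sub-1.1 target is so demanding.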

A January 2025 US Executive Order launched a Department of Energy and EPA “Grand Challenge” to push the PUE ratio below 1.1 and minimize water usage in AI facilities, signaling that efficiency is becoming a regulatory expectation, not just an industry aspiration.

Case Studies: What Companies Are Actually Building

Google’s Carbon-Intelligent Platform

Google has been running a carbon-intelligent computing platform since 2020, automatically shifting flexible compute workloads to times and locations where the electricity grid carries the lowest carbon intensity.

The median Google Gemini text prompt in 2025 consumes about 0.24 Wh of electricity. The energy use is comparable to watching roughly nine seconds of TV. The water use is roughly five drops.
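The TV comparison can be checked directly. If 0.24 Wh is spread over nine seconds, the implied power draw is the energy in joules divided by the time. The second calculation scales the per-prompt figure to a hypothetical volume of one billion prompts per day, purely to show how small per-query numbers add up. It is not a reported traffic figure.

```python
PROMPT_WH = 0.24   # median Gemini text prompt energy, as reported by Google
TV_SECONDS = 9     # "roughly nine seconds of TV"


def implied_watts(energy_wh: float, seconds: float) -> float:
    """Average power that would use `energy_wh` over `seconds`."""
    return energy_wh * 3600 / seconds  # Wh -> joules, then joules/second = watts


def daily_energy_mwh(prompts_per_day: float, wh_per_prompt: float) -> float:
    """Total daily energy in MWh for a given prompt volume."""
    return prompts_per_day * wh_per_prompt / 1e6


print(f"implied TV power: {implied_watts(PROMPT_WH, TV_SECONDS):.0f} W")
# Hypothetical volume, for illustration only.
print(f"1 billion prompts/day: {daily_energy_mwh(1e9, PROMPT_WH):.0f} MWh/day")
```

The comparison implies a TV drawing about 96 W, a plausible figure, so the two numbers are consistent with each other.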

That is the result of software efficiency improvements combined with aggressive clean energy procurement. Google’s central fleet program in 2024 helped avoid procurement of new components and machines with an embodied impact equivalent to approximately 260,000 metric tons of CO₂e, roughly equivalent to avoiding 660 million miles driven by an average gasoline-powered passenger vehicle.

Microsoft’s Multi-Technology Net-Zero Strategy

Microsoft has committed to becoming carbon negative by 2030, meaning it will remove more carbon than it emits. The strategy depends on combining renewable energy procurement with advanced nuclear energy, exploring emissions budgets as a governance mechanism, and connecting engineering decisions to sustainability outcomes at the infrastructure level.

In 2025, Microsoft, Google, Amazon, and Meta are projected to spend a combined $320 billion on AI infrastructure, more than double the $151 billion spent in 2023. The sustainability commitment has to operate at that same scale.

Meta’s Location Strategy and Water Commitment

Meta's deliberate choice to build data center infrastructure in northern Sweden reflects a clear sustainability logic: a colder climate means lower cooling energy, and proximity to hydroelectric power means lower carbon intensity per kilowatt-hour.

Meta aims to be water-positive by 2030, meaning it will restore more water to local ecosystems than it consumes. Whether that commitment holds as AI compute demands scale remains one of the more interesting tests in sustainable AI infrastructure.

The Future of Sustainable AI Infrastructure

Several trends are converging to reshape the landscape over the next five years.

- Regulatory pressure will intensify. The EU AI Act will require large AI systems to report energy use, resource consumption, and other life cycle impacts. A January 2025 US Executive Order directed the Department of Energy to draft reporting requirements for AI data centers covering their entire lifecycle, including metrics for embodied carbon, water usage, and waste heat. CTOs who have yet to start measuring their ML carbon footprint will likely be required to. Building the measurement infrastructure now, before regulatory deadlines arrive, is the smart move.

- Efficiency benchmarks will become competitive differentiators. The concept of publishing “carbon per benchmark point” or “CO₂ per useful output” metrics alongside model performance numbers is gaining traction in research. When those metrics become standard, model efficiency becomes public, and buyers, enterprises, and regulators will use them.

- Nuclear is entering the AI infrastructure conversation seriously. Amazon, Google, and Microsoft have collectively committed over $10 billion to nuclear investments including small modular reactors expected to come online between 2028 and 2030. Nuclear offers what solar and wind cannot: high-density, always-on, zero-carbon power that keeps AI training clusters running without grid variability.

- AI efficiency gains may compound faster than demand growth. Google’s improvements in software efficiency and clean energy procurement reduced energy use by a factor of 33 and carbon emissions by a factor of 44 for a typical Gemini prompt over a single year. If that pace of efficiency improvement continues, through better architectures, better chips, and better scheduling, the emissions curve could bend meaningfully even as output scales.

By 2030, AI could represent 35% to 50% of total data center electricity usage. Whether that energy is clean or dirty will be one of the most consequential infrastructure decisions made this decade.

What CTOs Should Do Right Now

These are practical steps available today, ranked by impact and speed to implement.

- Measure first, manage second. Tools like CodeCarbon, ML CO₂ Impact, and cloud provider sustainability dashboards make it possible to attach carbon estimates to individual training runs. Establish a baseline before setting reduction targets.

- Implement carbon-aware job scheduling. APIs like WattTime and Electricity Maps provide real-time carbon intensity data. Queue large, non-urgent training jobs for low-carbon grid windows. The latency cost is minimal. The emissions reduction is real.

- Evaluate model architecture before approving new training runs. Before spinning up a large-scale training job, ask whether a smaller fine-tuned model or an MoE architecture could achieve equivalent results for the actual use case. Efficiency gains of 10x or more are common for domain-specific applications.

- Make hardware procurement a sustainability decision. Evaluate AI chips and GPUs on performance-per-watt alongside raw throughput. The total cost of ownership calculation now includes energy costs over the hardware lifecycle.

- Connect engineering teams with sustainability teams. In most organizations, the team making AI infrastructure decisions and the team reporting on carbon emissions operate in separate silos. Bridging that gap, embedding sustainability metrics into engineering roadmaps, is the structural change that makes every other intervention sustainable beyond a single quarter.

- Pressure cloud providers for granular data. Ask for carbon metrics by workload, by region, and by hour. Demand carbon-aware routing as a default. Hyperscalers respond to enterprise customers with significant spend.
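"Measure first" can start with plain arithmetic before any tooling is in place. This minimal sketch follows the general structure behind per-run carbon estimates: accelerator power times runtime, scaled by the facility's PUE, gives energy, and energy times grid intensity gives emissions. It is not the API of CodeCarbon or any other tool, and every input value is a hypothetical placeholder. Measured power draw and real regional intensity data should replace them.

```python
from dataclasses import dataclass


@dataclass
class TrainingRun:
    """Hypothetical description of one training run."""
    num_gpus: int
    avg_power_watts: float   # average draw per GPU (measured or assumed)
    hours: float
    pue: float               # facility overhead multiplier
    grid_g_per_kwh: float    # carbon intensity of the supplying grid

    def energy_kwh(self) -> float:
        """Facility-level energy: GPU energy scaled up by PUE."""
        return self.num_gpus * self.avg_power_watts * self.hours / 1000 * self.pue

    def emissions_kg(self) -> float:
        """Emissions in kg CO2 from energy and grid intensity."""
        return self.energy_kwh() * self.grid_g_per_kwh / 1000


run = TrainingRun(num_gpus=64, avg_power_watts=400, hours=72, pue=1.2, grid_g_per_kwh=350)
print(f"{run.energy_kwh():,.0f} kWh, {run.emissions_kg():,.0f} kg CO2")
```

Even a rough per-run number like this establishes the baseline the list above calls for. It also makes the levers visible: fewer GPU-hours, a lower PUE, or a cleaner grid each reduce the result proportionally.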

5 Lessons From the Green AI Movement

These are the insights that the most forward-thinking engineering leaders have learned, often the hard way.

- Lesson 1: Scheduling is the cheapest green AI investment you will ever make. Carbon-aware scheduling requires no new hardware, no new models, and no major infrastructure overhaul. It requires a queue and a carbon intensity API. Temporal load shifting to solar hours can cut carbon intensity by around 40% with near-zero implementation cost. Most teams have simply never prioritized it.

- Lesson 2: Efficiency improvements in AI are compounding faster than most forecasts predicted. Google’s 33x energy reduction per Gemini prompt in a single year is extraordinary. A few years ago, that would have seemed implausible. The combination of architectural improvements, chip efficiency gains, and smarter scheduling is producing efficiency curves that are outpacing even optimistic projections. CTOs who are waiting for hardware to solve this problem are missing the gains already happening in software.

- Lesson 3: Location is a sustainability variable, not just an operational one. Where a model is trained matters enormously. The same training job in a coal-heavy grid versus a hydro-powered one can produce 10x the carbon emissions. The geography of AI infrastructure is a sustainability decision. Teams that are choosing data center regions purely on latency and cost are leaving emissions reductions on the table.

- Lesson 4: Carbon credits mask the problem rather than address it. Several major AI companies have claimed carbon neutrality while their actual measured emissions rose by 23% to 48%. The reliance on market-based instruments rather than actual reductions in fossil fuel use has been widely criticized by environmental experts. CTOs who are relying on renewable energy credits as their primary sustainability strategy are building on a foundation that regulators and sophisticated enterprise customers are beginning to challenge.

- Lesson 5: The next frontier of AI competition is efficiency, and sustainable AI is where it starts. The DeepSeek moment proved that brute-force computation is one path to model capability, not the only one. The team that can train equally capable models at 10% of the energy cost will have a structural cost advantage that compounds over time. Green AI and competitive AI are converging into the same engineering goal. CTOs who treat them as separate problems are solving an outdated version of the challenge.

Author’s Opinion

In my view, we have focused far too much on the “magic” of AI and not enough on the “machinery” behind it. The environmental cost of a single training run can be equivalent to hundreds of flights.

As someone who sees the potential for AI to solve climate issues, it would be a tragedy if the tool we build to save the world is the same one that accelerates its warming. Efficiency is the only way to ensure AI remains a net positive for humanity.

Frequently Asked Questions

What is Green AI?

Green AI refers to designing and deploying artificial intelligence systems in ways that minimize their environmental impact. This means using energy-efficient model architectures, running training workloads during renewable energy windows, selecting hardware for performance per watt, and measuring the carbon cost of every training run rather than ignoring it.

Why Does AI Model Training Produce Carbon Emissions?

AI training requires massive clusters of GPUs running continuously for weeks. These chips draw enormous electricity, much of it still coming from grids partly powered by fossil fuels. The heat they generate requires energy-intensive cooling systems that add to total power draw. When that electricity comes from coal or gas, it produces CO₂ proportional to the grid’s carbon intensity.

How Can Companies Reduce AI’s Environmental Impact?

Five main levers are available and deployable today:

- Train on efficient model architectures (MoE, sparse models, fine-tuned smaller models) that achieve equivalent results with less compute

- Use carbon-aware scheduling to time workloads to low-carbon grid windows

- Select purpose-built AI chips evaluated on performance-per-watt alongside raw throughput

- Locate or route compute toward renewable-powered data center regions

- Measure and report ML carbon metrics to create internal accountability

Which Industries are Leading Sustainable AI Efforts?

The clearest progress is happening at cloud hyperscalers (Google, Microsoft, Amazon), large AI research labs (Meta AI, Google DeepMind), and chip manufacturers (NVIDIA, Google with TPUs). The Green Software Foundation is making carbon-aware tooling available to teams without hyperscale infrastructure or procurement teams.

Can AI Become Genuinely Carbon Neutral?

The honest answer is: not quickly under current trajectories. The IEA central scenario projects data center electricity consumption rising to 945 TWh by 2030, roughly equal to Japan's entire annual electricity consumption today.

Renewable energy expansion, while significant, is running behind AI’s growth rate. Grid operators face connection lead times of over two years to connect new facilities to clean energy supplies. Carbon offsets face growing credibility questions from regulators in both the EU and US.

The more durable path runs through efficiency. The DeepSeek example suggested that training at a reported 5 to 10% of the compute cost of comparable models is achievable. When that becomes standard rather than exceptional, the emissions math changes completely.

Conclusion

For years, the AI arms race ran on a simple principle: whoever had the most GPUs, the most data, and the most compute won.

That principle is under real pressure now, from energy costs, from regulatory scrutiny, from communities pushing back on data center expansion, and from engineering teams at places like Google demonstrating that 33x efficiency improvements in a single year are achievable when efficiency becomes a genuine priority.

The industry is on an unsustainable path, but there are ways to encourage responsible development of generative AI that supports environmental objectives.

The next phase of AI competition may be less about who builds the most powerful model and more about who builds the most efficient one. The engineers who figure out how to push model performance forward while bending the carbon curve downward are defining what the next decade of AI infrastructure looks like.

Green AI is, at its core, an engineering problem. It has real, measurable, deployable solutions available right now. The teams treating it that way are already building the infrastructure that the next generation of AI will run on.